
[Chinese prompts supported] Stable Diffusion 3 (SD3) installation and deployment tutorial

Author: 黑客灵魂 | 2024-08-02 10:02:39


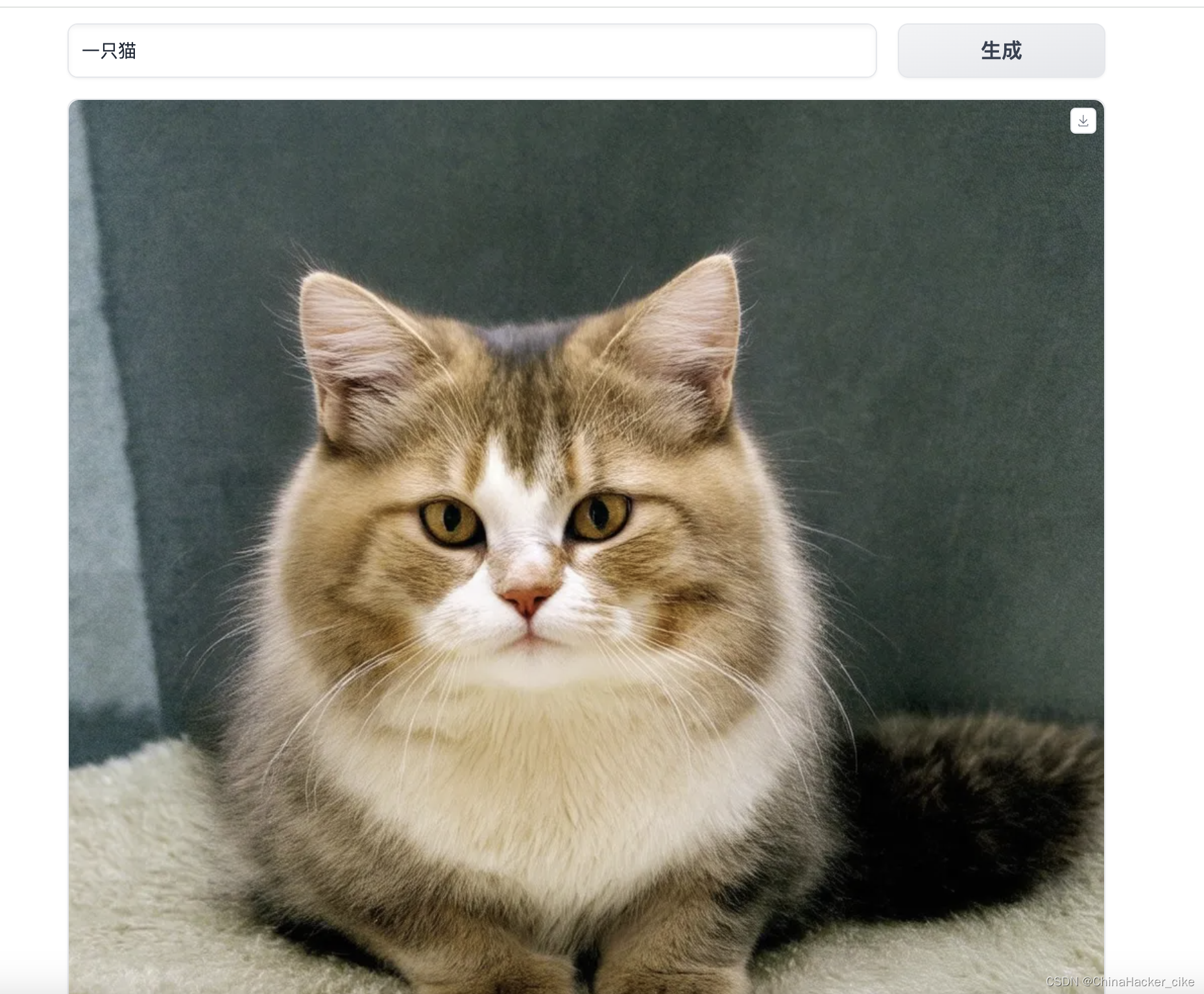

The demo's running interface

1. Download the model

Be sure to download the diffusers-format repository, stable-diffusion-3-medium-diffusers:

    git clone https://www.modelscope.cn/AI-ModelScope/stable-diffusion-3-medium-diffusers.git

2. Set up the base environment

    # Install PyTorch with CUDA 12.1 via conda
    conda install pytorch torchvision torchaudio pytorch-cuda=12.1 -c pytorch -c nvidia

    # Or install it via pip instead
    # pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121

    pip install gradio
    pip install spaces
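Before moving on, it can help to confirm that the packages from this step are actually importable. The following is a minimal sketch that uses only the standard library; the helper name `missing_packages` is hypothetical and not part of the original tutorial.

```python
from importlib.util import find_spec


def missing_packages(names):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if find_spec(name) is None]


if __name__ == "__main__":
    # Top-level modules installed in this step
    required = ["torch", "torchvision", "torchaudio", "gradio", "spaces"]
    missing = missing_packages(required)
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All base packages are installed.")
```

The check only looks the modules up without importing them, so it runs quickly even for a large package such as torch.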

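3. Install the SD3 environment

When this was written, the diffusers and transformers releases available through pip did not yet support SD3, so both libraries are installed from source.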

    git clone https://github.com/huggingface/diffusers.git
    git clone https://github.com/huggingface/transformers

    # Editable (development) install of diffusers
    cd diffusers/
    python setup.py develop
    pip install -e .

    # Editable (development) install of transformers
    cd ../transformers
    python setup.py develop
    pip install -e .
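Once both libraries are installed, one way to check that the build includes SD3 support is to look for the `StableDiffusion3Pipeline` class that the demo imports. This is only a sketch: `module_exports` and `installed_version` are hypothetical helpers, not part of the original tutorial. If a release installed from pip already exposes the class, the source build may not be needed.

```python
import importlib
from importlib import metadata


def module_exports(module_name, attr):
    """Return True if module_name can be imported and exposes attr."""
    try:
        module = importlib.import_module(module_name)
        # Lazily loaded packages may raise RuntimeError when resolving a name
        getattr(module, attr)
    except (ImportError, AttributeError, RuntimeError):
        return False
    return True


def installed_version(dist_name):
    """Return the installed version string of a distribution, or None if absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    for dist in ("diffusers", "transformers"):
        print(dist, installed_version(dist))
    print("SD3 pipeline available:",
          module_exports("diffusers", "StableDiffusion3Pipeline"))
```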

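4. Run the SD3 demo

Save the code below as demo.py, then run it directly with python demo.py.

Prompt translation uses the Baidu Translate API. Substitute your own credentials (APPID and secret key), or remove the translation step if you do not want it.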

    import hashlib
    import random

    import gradio as gr
    import numpy as np
    import requests
    import spaces
    import torch
    from diffusers import StableDiffusion3Pipeline

    device = "cuda" if torch.cuda.is_available() else "cpu"
    dtype = torch.float16

    # Local path of the model repository cloned earlier
    repo = "/path/to/your/models/stable-diffusion-3-medium-diffusers"
    pipe = StableDiffusion3Pipeline.from_pretrained(repo, torch_dtype=dtype).to(device)

    MAX_SEED = np.iinfo(np.int32).max
    MAX_IMAGE_SIZE = 1344


    def translate_baidu(query, from_lang='auto', to_lang='en'):
        """Translate query with the Baidu Translate API; return it unchanged on failure."""
        base_url = "https://fanyi-api.baidu.com/api/trans/vip/translate"
        appid = 'YOUR_APPID'
        secret_key = 'YOUR_SECRET_KEY'
        salt = str(random.randint(32768, 65536))
        # Request signature: MD5 of appid + query + salt + secret key
        sign = appid + query + salt + secret_key
        sign = hashlib.md5(sign.encode()).hexdigest()

        params = {
            'q': query,
            'from': from_lang,
            'to': to_lang,
            'appid': appid,
            'salt': salt,
            'sign': sign,
        }

        response = requests.get(base_url, params=params)
        if response.status_code == 200:
            result = response.json()
            if "trans_result" in result:
                return result["trans_result"][0]["dst"]
            print(f"Translation failed: {result}")
        else:
            print(f"Translation failed: HTTP {response.status_code}")
        # Fall back to the original text so the pipeline never receives an error payload
        return query


    @spaces.GPU
    def infer(prompt, negative_prompt, seed, randomize_seed, width, height,
              guidance_scale, num_inference_steps, progress=gr.Progress(track_tqdm=True)):
        if randomize_seed:
            seed = random.randint(0, MAX_SEED)

        # manual_seed requires an int; the slider value is cast to be safe
        seed = int(seed)
        generator = torch.Generator().manual_seed(seed)

        # Log the prompt before and after translation to English
        print(prompt)
        prompt = translate_baidu(prompt, 'zh', 'en')
        print(prompt)

        image = pipe(
            prompt=prompt,
            negative_prompt=negative_prompt,
            guidance_scale=guidance_scale,
            num_inference_steps=num_inference_steps,
            width=width,
            height=height,
            generator=generator,
        ).images[0]

        return image, seed


    examples = []

    css = """
    #col-container {
        margin: 0 auto;
        max-width: 800px;
    }
    """

    with gr.Blocks(css=css) as demo:

        with gr.Column(elem_id="col-container"):
            gr.Markdown("""
            ### Stable Diffusion 3 Test
            #### By Your Name
            """)

            with gr.Row():
                prompt = gr.Text(
                    label="Prompt",
                    show_label=False,
                    max_lines=1,
                    placeholder="Enter your prompt",
                    container=False,
                )

                run_button = gr.Button("Generate", scale=0)

            result = gr.Image(label="Result", show_label=False)

            with gr.Accordion("More parameters", open=False):
                negative_prompt = gr.Text(
                    label="Negative prompt",
                    max_lines=1,
                    placeholder="Enter a negative prompt",
                )

                seed = gr.Slider(
                    label="Seed",
                    minimum=0,
                    maximum=MAX_SEED,
                    step=1,
                    value=0,
                )

                randomize_seed = gr.Checkbox(label="Randomize seed", value=True)

                with gr.Row():
                    width = gr.Slider(
                        label="Width",
                        minimum=256,
                        maximum=MAX_IMAGE_SIZE,
                        step=64,
                        value=1024,
                    )

                    height = gr.Slider(
                        label="Height",
                        minimum=256,
                        maximum=MAX_IMAGE_SIZE,
                        step=64,
                        value=1024,
                    )

                with gr.Row():
                    guidance_scale = gr.Slider(
                        label="Guidance scale",
                        minimum=0.0,
                        maximum=10.0,
                        step=0.1,
                        value=5.0,
                    )

                    num_inference_steps = gr.Slider(
                        label="Inference steps",
                        minimum=1,
                        maximum=50,
                        step=1,
                        value=28,
                    )

        # Run inference on button click or when either text box is submitted
        gr.on(
            triggers=[run_button.click, prompt.submit, negative_prompt.submit],
            fn=infer,
            inputs=[prompt, negative_prompt, seed, randomize_seed, width, height,
                    guidance_scale, num_inference_steps],
            outputs=[result, seed],
        )

    demo.launch(server_name="0.0.0.0", server_port=7862, show_error=True)
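
The translation step can also be exercised without starting the demo or calling the network. The sketch below isolates the signature that `translate_baidu` computes (the MD5 of appid + query + salt + secret key, as in the demo code). It also adds a hypothetical `contains_chinese` check, not in the original, that could be used to skip the API call for prompts already written in English. Checking only the basic CJK Unified Ideographs block is an assumption that should suffice for typical Chinese prompts.

```python
import hashlib


def baidu_sign(appid, query, salt, secret_key):
    """Build the request signature: MD5 hex digest of appid + query + salt + secret_key."""
    raw = appid + query + salt + secret_key
    return hashlib.md5(raw.encode("utf-8")).hexdigest()


def contains_chinese(text):
    """Return True if text has at least one character in U+4E00 to U+9FFF."""
    return any("\u4e00" <= ch <= "\u9fff" for ch in text)


if __name__ == "__main__":
    # Placeholder credentials, for illustration only
    print(baidu_sign("YOUR_APPID", "一只猫", "12345", "YOUR_SECRET_KEY"))
    print(contains_chinese("一只猫"))
    print(contains_chinese("a cat"))
```

In `infer`, the translation call could then be guarded with `if contains_chinese(prompt):` so that English prompts are passed to the pipeline unchanged.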
