
Python Web Scraping Notes: The requests and selenium Modules

Author: 空白诗007 | 2024-07-17 11:19:46


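I. The requests module

Concept:

requests is a third-party Python library for sending HTTP requests (it is installed with pip and is not part of the standard library). It is powerful, simple to use, and efficient.

Purpose: simulate a browser sending requests.

How to use it (the requests coding workflow):

- Specify the URL

- Send the request

- Get the response data

- Persist the data

Installation:

pip install requests

Hands-on examples:

- Scrape the page data of the Sogou home page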

```python
import requests

if __name__ == "__main__":
    # Step 1: specify the URL
    url = "https://www.sogou.com/"
    # Step 2: send the request; get() returns a response object
    response = requests.get(url=url)
    # Step 3: get the response data; the text attribute holds the body as a string
    page_text = response.text
    print(page_text)
    # Step 4: persist the data
    with open('./sogou.html', 'w', encoding='utf-8') as fp:
        fp.write(page_text)
    print("Scraping finished")
```
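The four steps can also be wrapped in a small reusable function. The following is only a sketch: `fetch_and_save`, `FakeResponse`, and `fake_fetch` are hypothetical names introduced here, and the timeout and status check are additions that the original example does not have. The stand-in response lets the function be tried without network access.

```python
import tempfile
from pathlib import Path

import requests


def fetch_and_save(url, path, fetch=requests.get):
    """Run the four steps: URL -> request -> response text -> file."""
    response = fetch(url, timeout=10)  # step 2, with a timeout so the call cannot hang forever
    response.raise_for_status()        # raise on 4xx/5xx instead of saving an error page
    page_text = response.text          # step 3
    Path(path).write_text(page_text, encoding="utf-8")  # step 4
    return len(page_text)


class FakeResponse:
    """Hypothetical stand-in for a response, used only for the offline demo."""
    text = "<html>搜狗</html>"

    def raise_for_status(self):
        return None


def fake_fetch(url, timeout=None):
    return FakeResponse()


out_path = Path(tempfile.mkdtemp()) / "sogou.html"
n_chars = fetch_and_save("https://www.sogou.com/", out_path, fetch=fake_fetch)
saved = out_path.read_text(encoding="utf-8")
```

For a real run, call `fetch_and_save("https://www.sogou.com/", "./sogou.html")` and leave `fetch` at its default.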


- Simple web page collector

UA detection

UA spoofing

```python
import requests

if __name__ == "__main__":
    # UA spoofing: wrap the browser's User-Agent string in a dictionary
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.71 Safari/537.36'
    }
    # Step 1: specify the URL
    url = "https://www.sogou.com/web"
    # Put the URL's query parameters in a dictionary
    kw = input('enter a word:')
    param = {
        'query': kw
    }
    # Step 2: send the request; requests appends the parameters to the URL,
    # and get() returns a response object
    response = requests.get(url=url, params=param, headers=headers)
    # Step 3: get the response data; the text attribute holds the body as a string
    page_text = response.text
    print(page_text)
    # Step 4: persist the data
    fileName = kw + '.html'
    with open(fileName, 'w', encoding='utf-8') as fp:
        fp.write(page_text)
    print(fileName, "saved successfully")
```
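To see what `params` actually does to the URL, a request can be prepared without being sent. This is a small sketch; the search phrase is an arbitrary example, and the URL is the one used in the collector.

```python
from urllib.parse import parse_qs, urlsplit

import requests

url = "https://www.sogou.com/web"
# Build the request but do not send it; prepare() applies the same URL encoding that get() would.
prepared = requests.Request("GET", url, params={"query": "python 爬虫"}).prepare()
full_url = prepared.url

# Decode the query string again to confirm the parameter survived the round trip.
decoded = parse_qs(urlsplit(full_url).query)
print(full_url)
print(decoded)
```

The printed URL shows the query string appended after `?`, with the space and the non-ASCII characters escaped, so there is no need to build or encode the query string by hand.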


- Cracking Baidu Translate

POST request (carries parameters)

The response data is JSON

```python
import json

import requests

if __name__ == "__main__":
    # Step 1: specify the URL
    post_url = 'https://fanyi.baidu.com/sug'
    # Prepare the POST parameters (same approach as for a GET request)
    word = input('enter a word:')
    data = {
        'kw': word
    }
    # UA spoofing
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.71 Safari/537.36'
    }
    # Step 2: send the request
    response = requests.post(url=post_url, data=data, headers=headers)
    # Step 3: get the response data; json() returns a Python object
    # (only call json() once you have confirmed the response body is JSON)
    dic_obj = response.json()
    print(dic_obj)
    # Step 4: persist the data; the with block closes the file when done
    fileName = word + ".json"
    with open(fileName, 'w', encoding='utf-8') as fp:
        json.dump(dic_obj, fp=fp, ensure_ascii=False)
    print("over")
```
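The `data=` argument sends the dictionary as a form-encoded body. Preparing the request without sending it makes that visible. This is a sketch with a fixed sample word; the `json=` variant is included only for contrast and is not something the original example uses or that the endpoint is known to accept.

```python
import requests

post_url = "https://fanyi.baidu.com/sug"

# data= produces a form-encoded body, which is what the example above sends.
form_request = requests.Request("POST", post_url, data={"kw": "dog"}).prepare()
form_body = form_request.body
form_type = form_request.headers["Content-Type"]

# json= (shown for contrast only) serializes the dictionary as a JSON document instead.
json_request = requests.Request("POST", post_url, json={"kw": "dog"}).prepare()
json_type = json_request.headers["Content-Type"]

print(form_type, form_body)
print(json_type)
```

Which of the two a server expects can be checked in the browser's network panel by looking at the request's Content-Type header.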


- Scrape the Douban movie category ranking list

```python
import json

import requests

if __name__ == "__main__":
    url = 'https://movie.douban.com/j/chart/top_list'
    param = {
        'type': '24',
        'interval_id': '100:90',
        'action': '',
        'start': '0',   # index of the first movie to fetch from the list
        'limit': '20'   # number of movies to fetch in one request
    }
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.71 Safari/537.36'
    }
    response = requests.get(url=url, params=param, headers=headers)
    list_data = response.json()
    # The with block closes the file once the JSON has been written
    with open('./douban.json', 'w', encoding='utf-8') as fp:
        json.dump(list_data, fp=fp, ensure_ascii=False)
    print("over")
```
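Since `start` and `limit` control which slice of the ranking is returned, fetching more than one batch only requires changing `start`. The helper below is a sketch: `page_params` is a hypothetical name, and it assumes `start` is a zero-based offset, as the comments in the example suggest.

```python
def page_params(page, page_size=20):
    """Build the query parameters for one zero-based page of results."""
    return {
        "type": "24",
        "interval_id": "100:90",
        "action": "",
        "start": str(page * page_size),  # offset of the first movie on this page
        "limit": str(page_size),         # number of movies per request
    }


# Parameters for the first three pages; each dict can be passed as params= to requests.get().
first_three = [page_params(page) for page in range(3)]
starts = [params["start"] for params in first_three]
print(starts)
```

When looping over pages against the real site, it is sensible to pause between requests so the crawler does not hammer the server.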


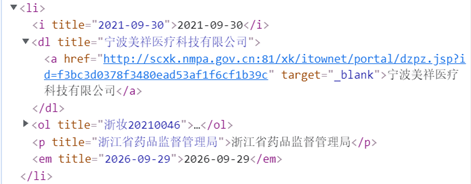

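- Scrape the cosmetics production license data of the People's Republic of China from the National Medical Products Administration: http://scxk.nmpa.gov.cn:81/xk/

Dynamically loaded data

The company information shown on the home page is fetched through an ajax request, so it is not part of the HTML returned for the home page URL itself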

```python
import requests

if __name__ == "__main__":
    # UA spoofing: wrap the browser's User-Agent string in a dictionary
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.71 Safari/537.36'
    }
    url = "http://scxk.nmpa.gov.cn:81/xk/"
    # Fetch only the home page HTML and save it for inspection
    page_text = requests.get(url=url, headers=headers).text
    with open('./huazhuangpin.html', 'w', encoding='utf-8') as fp:
        fp.write(page_text)
```
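One way to confirm that data is loaded dynamically is to check whether the list in the saved HTML actually contains any items. The sketch below uses only the standard library; `ListItemCounter` and `count_items` are hypothetical helpers, the two HTML strings are made-up samples rather than real responses, and the `gzlist` id is taken from the XPath used in the selenium section of these notes.

```python
from html.parser import HTMLParser


class ListItemCounter(HTMLParser):
    """Count <li> elements nested inside the element with a given id."""

    def __init__(self, container_id):
        super().__init__()
        self.container_id = container_id
        self.container_tag = None  # tag name of the container while we are inside it
        self.depth = 0             # nesting depth of same-named tags inside the container
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if self.container_tag is None:
            if dict(attrs).get("id") == self.container_id:
                self.container_tag, self.depth = tag, 1
        elif tag == self.container_tag:
            self.depth += 1
        elif tag == "li":
            self.count += 1

    def handle_endtag(self, tag):
        if self.container_tag is not None and tag == self.container_tag:
            self.depth -= 1
            if self.depth == 0:
                self.container_tag = None


def count_items(html, container_id="gzlist"):
    parser = ListItemCounter(container_id)
    parser.feed(html)
    return parser.count


# Made-up samples: roughly what requests might receive versus what a browser shows after ajax.
static_html = '<html><body><ul id="gzlist"></ul></body></html>'
rendered_html = (
    '<html><body><ul id="gzlist">'
    '<li><dl title="Company A"></dl></li>'
    '<li><dl title="Company B"></dl></li>'
    '</ul></body></html>'
)

static_count = count_items(static_html)
rendered_count = count_items(rendered_html)
print(static_count, rendered_count)
```

If the count for the saved page is zero while the browser shows entries, the entries come from a separate request, which can be located in the browser's network panel.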


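UA:

User-Agent (the identity of the client that carries the request)

UA detection:

- A portal site's server checks the User-Agent of each incoming request. If it identifies a browser, the request is treated as normal. If it does not identify a browser, the request is treated as abnormal (a crawler), and the server is likely to reject it.

UA spoofing:

- Make the crawler's User-Agent look like that of a browser.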
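The reason UA detection catches an unmodified crawler is that requests announces itself by default. The sketch below compares the default User-Agent with a spoofed one by preparing a request without sending it; `BROWSER_UA` is just the browser string used throughout these notes.

```python
import requests

BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/94.0.4606.71 Safari/537.36"
)

# What requests sends when no User-Agent is supplied.
default_ua = requests.utils.default_user_agent()

# A session applies its headers to every request made through it.
session = requests.Session()
session.headers.update({"User-Agent": BROWSER_UA})
prepared = session.prepare_request(requests.Request("GET", "https://www.sogou.com/"))
sent_ua = prepared.headers["User-Agent"]

print(default_ua)
print(sent_ua)
```

Setting the header once on a session avoids passing `headers=` to every call, which is convenient when a script makes many requests to the same site.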

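II. The selenium module

Question:

How is the selenium module related to web scraping?

- It conveniently retrieves dynamically loaded data from a website

- It conveniently implements simulated login

What is the selenium module?

- A module based on browser automation.

selenium workflow:

- Install it: pip install selenium

- Download a driver for your browser

Download location: http://chromedriver.storage.googleapis.com/index.html

- Instantiate a browser object

- Write the browser automation code

Because the data is loaded dynamically, do a global search across the captured packets under All (in the browser's developer tools):

- Select a packet and press Ctrl+F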

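```python
from time import sleep

from lxml import etree
from selenium import webdriver
from selenium.webdriver.chrome.service import Service

# Instantiate a browser object, passing in the browser driver.
# Selenium 4 takes the driver path through Service; the older
# executable_path argument has been removed in recent releases.
bro = webdriver.Chrome(service=Service(r'E:\Downloads\chromedriver.exe'))
# Have the browser request the given URL
bro.get("http://scxk.nmpa.gov.cn:81/xk/")
# Get the source of the page currently loaded in the browser
page_text = bro.page_source
# Parse the company names
tree = etree.HTML(page_text)
li_list = tree.xpath('//ul[@id="gzlist"]/li')
for li in li_list:
    name = li.xpath('./dl/@title')[0]
    print(name)
sleep(5)
bro.quit()
```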


With Python 3.9, importing etree from lxml raises an error.

Fix: lxml 4.2.5 ships the etree module, and that version of lxml supports Python 3.7.4.
