[AI Project] Text Domain Classification with BERT

Author: 爱喝兽奶帝天荒 | 2024-06-25 00:07:52

Welcome in! This time we use BERT for a text domain classification task. The experiments were run on Google Colab. Let's go!

Machine environment

!nvidia-smi

Imports

#! -*- coding:utf-8 -*-
import re, os, json, codecs, gc
import time
import datetime
import warnings

import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
import seaborn as sns

from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder
from sklearn.utils import class_weight as cw

from keras import Sequential
from keras.models import Model
from keras.layers import LSTM,Activation,Dense,Dropout,Input,Embedding,BatchNormalization,Add,concatenate,Flatten
from keras.layers import Conv1D,Conv2D,Convolution1D,MaxPool1D,SeparableConv1D,SpatialDropout1D,GlobalAvgPool1D,GlobalMaxPool1D,GlobalMaxPooling1D
from keras.layers.pooling import _GlobalPooling1D
from keras.layers import MaxPooling2D,GlobalMaxPooling2D,GlobalAveragePooling2D
from keras.optimizers import RMSprop,Adam
from keras.preprocessing.text import Tokenizer
from keras.preprocessing import sequence
from keras.utils import to_categorical
from keras.callbacks import EarlyStopping
from keras.callbacks import ModelCheckpoint
from keras.callbacks import ReduceLROnPlateau

%matplotlib inline
warnings.filterwarnings("ignore")

Reading the files

train_dataset_path = "train.txt"
test_dataset_path = "test.txt"
label_dataset_path = "class.txt"

# Tab-separated files without a header row: one text and one label per line
train_df = pd.read_csv(train_dataset_path,encoding="utf-8",sep='\t',names=["text","label"])
test_df = pd.read_csv(test_dataset_path,encoding="utf-8",sep = '\t',names=["text","label"])
label_df = pd.read_csv(label_dataset_path,encoding="utf-8",header=None,sep = '\t')
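The files are read as tab-separated text with no header row. The parsing step can be sketched with the standard library alone, assuming each line has the layout `text<TAB>label`. The sample lines and the helper name `parse_tsv` are invented for illustration and do not come from the dataset.

```python
import csv
import io

# Invented sample in the assumed "text<TAB>label" layout (not real dataset rows)
sample = "球队昨晚赢得比赛\t7\n新款手机今日发布\t4\n"

def parse_tsv(handle):
    """Return a list of {"text", "label"} dicts from a tab-separated stream."""
    reader = csv.reader(handle, delimiter="\t", quoting=csv.QUOTE_NONE)
    # Skip malformed lines that do not have exactly two fields
    return [{"text": row[0], "label": int(row[1])} for row in reader if len(row) == 2]

records = parse_tsv(io.StringIO(sample))
print(records)
```

Passing `names=["text","label"]` to `pd.read_csv` plays the same role as the dict keys here: it names the two columns because the file itself has no header.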

train_df


test_df


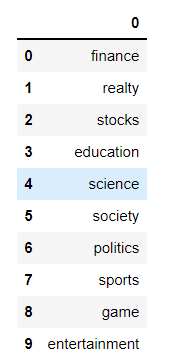

label_df


Dropping rows without labels

train_df.dropna(axis=0, how='any', inplace=True)

Cleaning the text data

import re

# Note: this name shadows Python's built-in filter(); it is kept because clean_text calls it
def filter(text):
    # Remove ASCII letters, digits and common ASCII punctuation
    text = re.sub("[A-Za-z0-9\!\=\?\%\[\]\,\(\)\>\<:<\/#\. -----\_]", "", text)
    text = text.replace('图片', '')
    text = text.replace('\xa0', '') # remove non-breaking spaces
    # new
    r1 = "\\【.*?】+|\\《.*?》+|\\#.*?#+|[.!/_,$&%^*()<>+""'?@|:~{}#]+|[——!\\\,。=?、:“”‘’¥……()《》【】]"
    cleanr = re.compile('<.*?>')
    text = re.sub(cleanr, ' ', text) # strip HTML tags
    text = re.sub(r1,'',text)
    text = text.strip()
    return text
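To make the effect of this kind of cleaning concrete, here is a reduced sketch with three of the steps: tag stripping, bracketed-segment removal, and ASCII letter/digit removal. It is not the function above. The name `clean_sample`, the simplified patterns, and the sample string are assumptions for illustration, and stripping tags first is an ordering choice of this sketch.

```python
import re

TAG_RE = re.compile(r"<.*?>")              # HTML-like tags
BRACKET_RE = re.compile(r"【.*?】|《.*?》")  # bracketed segments such as 【...】
ASCII_RE = re.compile(r"[A-Za-z0-9]")      # ASCII letters and digits

def clean_sample(text):
    """Apply the three simplified cleaning steps in order, then trim whitespace."""
    text = TAG_RE.sub(" ", text)
    text = BRACKET_RE.sub("", text)
    text = ASCII_RE.sub("", text)
    return text.strip()

cleaned = clean_sample("<b>【快讯】</b>中国队3比0获胜abc")
print(cleaned)  # 中国队比获胜
```

Digits are removed along with letters, so a score such as "3比0" loses its numbers. Whether that is acceptable depends on the classification task.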

def clean_text(data):
    data['text'] = data['text'].apply(lambda x: filter(x))
    return data

train = clean_text(train_df)
test = clean_text(test_df)

Label statistics

# Pass the column as x= so the call works across seaborn versions
sns.countplot(x=train_df["label"])
plt.xlabel("Label")
plt.title("News sentiment analysis")
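The count plot shows how many samples fall under each label. The same numbers can be read off without plotting. Here is a small sketch using made-up labels rather than the real training labels:

```python
from collections import Counter

# Made-up labels for illustration; in the notebook these would come from train_df["label"]
labels = [0, 1, 1, 2, 2, 2]

counts = Counter(labels)
for label, n in sorted(counts.items()):
    print(f"label {label}: {n} samples ({n / len(labels):.0%})")
```

A strongly skewed distribution is worth knowing about before training, because accuracy alone can hide poor performance on rare classes.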

Final dataset

train_df["ocr"] = train_df["text"]
test_df["ocr"] = test_df["text"]

train_df = train_df[:45000]

train_df.shape

安装bert

!pip install keras_bert

- 1

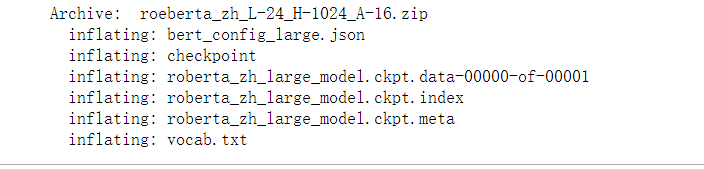

下载bert的预训练好的权重语料。

!wget -c https://storage.googleapis.com/chineseglue/pretrain_models/roeberta_zh_L-24_H-1024_A-16.zip

- 1

!unzip roeberta_zh_L-24_H-1024_A-16.zip

- 1

Training

#! -*- coding:utf-8 -*-
import re, os, json, codecs, gc
import numpy as np
import pandas as pd
from random import choice
from sklearn.preprocessing import LabelEncoder
from sklearn.model_selection import KFold
from keras_bert import load_trained_model_from_checkpoint, Tokenizer
from keras.layers import *
from keras.callbacks import *
from keras.models import Model
import keras.backend as K
from keras.optimizers import Adam
from keras.metrics import top_k_categorical_accuracy

maxlen = 512
config_path = './bert_config_large.json'
# checkpoint_path = '/export/home/liuyuzhong/kaggle/bert/chinese_L-12_H-768_A-12/bert_model.ckpt'
checkpoint_path = './roberta_zh_large_model.ckpt'
dict_path = './vocab.txt'

# Build the token -> id mapping from the vocabulary file
token_dict = {}
with codecs.open(dict_path, 'r', 'utf8') as reader:
    for line in reader:
        token = line.strip()
        token_dict[token] = len(token_dict)

class OurTokenizer(Tokenizer):
    # Character-level tokenization: one token per character
    def _tokenize(self, text):
        R = []
        for c in text:
            if c in self._token_dict:
                R.append(c)
            elif self._is_space(c):
                R.append('[unused1]')  # represent whitespace with the untrained [unused1] token
            else:
                R.append('[UNK]')  # all remaining characters map to [UNK]
        return R

tokenizer = OurTokenizer(token_dict)

def seq_padding(X, padding=0):
    # Pad every sequence in the batch to the length of the longest one
    L = [len(x) for x in X]
    ML = max(L)
    return np.array([
        np.concatenate([x, [padding] * (ML - len(x))]) if len(x) < ML else x for x in X
    ])

class data_generator:
    def __init__(self, data, batch_size=32, shuffle=True):
        self.data = data
        self.batch_size = batch_size
        self.shuffle = shuffle
        self.steps = len(self.data) // self.batch_size
        if len(self.data) % self.batch_size != 0:
            self.steps += 1

    def __len__(self):
        return self.steps

    def __iter__(self):
        while True:
            idxs = list(range(len(self.data)))
            if self.shuffle:
                np.random.shuffle(idxs)
            X1, X2, Y = [], [], []
            for i in idxs:
                d = self.data[i]
                # Leave room for [CLS] and [SEP] so the encoded length stays within maxlen
                text = d[0][:maxlen - 2]
                x1, x2 = tokenizer.encode(first=text)
                y = d[1]
                X1.append(x1)
                X2.append(x2)
                Y.append([y])
                if len(X1) == self.batch_size or i == idxs[-1]:
                    X1 = seq_padding(X1)
                    X2 = seq_padding(X2)
                    Y = seq_padding(Y)
                    yield [X1, X2], Y[:, 0, :]
                    [X1, X2, Y] = [], [], []

def acc_top5(y_true, y_pred):
    return top_k_categorical_accuracy(y_true, y_pred, k=5)

def build_bert(nclass):
    bert_model = load_trained_model_from_checkpoint(config_path, checkpoint_path, seq_len=None)

    # Fine-tune all BERT layers
    for l in bert_model.layers:
        l.trainable = True

    x1_in = Input(shape=(None,))
    x2_in = Input(shape=(None,))

    x = bert_model([x1_in, x2_in])
    x = Lambda(lambda x: x[:, 0])(x)  # take the vector at the [CLS] position
    p = Dense(nclass, activation='softmax')(x)

    model = Model([x1_in, x2_in], p)
    model.compile(loss='categorical_crossentropy',
                  optimizer=Adam(1e-5),
                  metrics=['accuracy', acc_top5])
    model.summary()
    return model
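The tokenizer and `seq_padding` above do two simple things: map each character to a vocabulary token, then pad every sequence in a batch to the longest length. A dependency-free sketch of both steps follows. The toy vocabulary and the names `char_tokenize` and `pad_batch` are assumptions for illustration, and `str.isspace` stands in for keras_bert's own whitespace check, which may differ in detail.

```python
def char_tokenize(text, vocab):
    """One token per character: itself if known, '[unused1]' for whitespace, else '[UNK]'."""
    tokens = []
    for ch in text:
        if ch in vocab:
            tokens.append(ch)
        elif ch.isspace():
            tokens.append("[unused1]")
        else:
            tokens.append("[UNK]")
    return tokens

def pad_batch(sequences, padding=0):
    """Right-pad every sequence with `padding` up to the longest length in the batch."""
    max_len = max(len(seq) for seq in sequences)
    return [list(seq) + [padding] * (max_len - len(seq)) for seq in sequences]

# Hypothetical toy vocabulary; the real one is loaded from vocab.txt
toy_vocab = {"[PAD]": 0, "[UNK]": 1, "[unused1]": 2, "体": 3, "育": 4}

tokens = char_tokenize("体 育X", toy_vocab)
ids = [toy_vocab[t] for t in tokens]
batch = pad_batch([ids, ids[:2]])
print(tokens)  # ['体', '[unused1]', '育', '[UNK]']
print(batch)   # [[3, 2, 4, 1], [3, 2, 0, 0]]
```

Padding per batch rather than to a fixed global length keeps short batches small, which matters for a large model where memory grows with sequence length.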

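from keras.utils import to_categorical

# Each entry is a (text, one-hot label) pair; 10 is the number of classes
DATA_LIST = []
# adjust: number of classes
for data_row in train_df.iloc[:].itertuples():
    DATA_LIST.append((data_row.ocr, to_categorical(data_row.label, 10)))
# dtype=object keeps a (n_samples, 2) array of (str, ndarray) pairs on recent NumPy versions
DATA_LIST = np.array(DATA_LIST, dtype=object)

# Test labels are placeholders (class 0); they are not used for prediction
DATA_LIST_TEST = []
for data_row in test_df.iloc[:].itertuples():
    DATA_LIST_TEST.append((data_row.ocr, to_categorical(0, 10)))
DATA_LIST_TEST = np.array(DATA_LIST_TEST, dtype=object)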
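The labels are one-hot encoded because the model is compiled with `categorical_crossentropy`, which expects a vector with a single 1 at the class index. A plain-Python sketch of that encoding follows; the helper `one_hot` is illustrative and is not the Keras implementation.

```python
def one_hot(label, num_classes):
    """Return a list of floats with 1.0 at index `label` and 0.0 elsewhere."""
    if not 0 <= label < num_classes:
        raise ValueError(f"label {label} is outside [0, {num_classes})")
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec

encoded = one_hot(3, 10)
print(encoded)
```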

def run_cv(nfold, data, data_label, data_test):
    kf = KFold(n_splits=nfold, shuffle=True, random_state=520).split(data)
    # adjust: number of classes
    train_model_pred = np.zeros((len(data), 10))
    test_model_pred = np.zeros((len(data_test), 10))
    # ModelCheckpoint does not create the output directory itself
    os.makedirs('./bert_dump', exist_ok=True)

    for i, (train_fold, test_fold) in enumerate(kf):
        X_train, X_valid = data[train_fold, :], data[test_fold, :]

        # adjust: number of classes
        model = build_bert(10)
        # Keras >= 2.3 logs the metric as 'val_accuracy' (history_plot reads the same key);
        # older versions use 'val_acc'
        early_stopping = EarlyStopping(monitor='val_accuracy', patience=3)
        plateau = ReduceLROnPlateau(monitor="val_accuracy", verbose=1, mode='max', factor=0.5, patience=2)
        checkpoint = ModelCheckpoint('./bert_dump/' + str(i) + '.hdf5', monitor='val_accuracy',
                                     verbose=2, save_best_only=True, mode='max', save_weights_only=True)

        train_D = data_generator(X_train, shuffle=True)
        # No shuffling here: the out-of-fold predictions below must stay in the order of test_fold
        valid_D = data_generator(X_valid, shuffle=False)
        test_D = data_generator(data_test, shuffle=False)

        history = model.fit_generator(
            train_D.__iter__(),
            steps_per_epoch=len(train_D),
            epochs=3,
            validation_data=valid_D.__iter__(),
            validation_steps=len(valid_D),
            callbacks=[early_stopping, plateau, checkpoint],
        )
        model.save_weights("model2.h5")

        # model.load_weights('./bert_dump/' + str(i) + '.hdf5')
        model.save('model.h5')  # HDF5 file; requires pip install h5py
        # return model
        train_model_pred[test_fold, :] = model.predict_generator(valid_D.__iter__(), steps=len(valid_D), verbose=1)
        # Fold predictions are summed; the argmax taken later is unaffected by the missing average
        test_model_pred += model.predict_generator(test_D.__iter__(), steps=len(test_D), verbose=1)

        del model; gc.collect()
        K.clear_session()
        # break

    # history holds the training curves of the last fold only
    return train_model_pred, test_model_pred, history
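The cross-validation loop writes each fold's validation predictions back at the original sample indices, so that after all folds every training sample has exactly one out-of-fold prediction. A dependency-free sketch of that bookkeeping follows. The splitter `kfold_indices` is a simple stand-in and does not reproduce scikit-learn's `KFold` partitioning, and `toy_model` is a hypothetical placeholder for the fine-tuned BERT model.

```python
import random

def kfold_indices(n_samples, n_splits, seed=520):
    """Yield (train_idx, valid_idx) pairs; every sample is validated exactly once."""
    idxs = list(range(n_samples))
    random.Random(seed).shuffle(idxs)
    folds = [sorted(idxs[k::n_splits]) for k in range(n_splits)]
    for k in range(n_splits):
        train = sorted(i for j, fold in enumerate(folds) if j != k for i in fold)
        yield train, folds[k]

def toy_model(train_rows):
    """Hypothetical stand-in for training: always predicts the training mean."""
    mean = sum(train_rows) / len(train_rows)
    return lambda row: mean

data = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
oof = [None] * len(data)  # out-of-fold predictions, aligned with `data`

for train_idx, valid_idx in kfold_indices(len(data), n_splits=2):
    predict = toy_model([data[i] for i in train_idx])
    for i in valid_idx:
        oof[i] = predict(data[i])  # write back at the sample's original index

print(oof)
```

The alignment only holds if predictions come back in the same order as the validation indices, which is why the validation generator must not shuffle when it is used for prediction.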

train_model_pred, test_model_pred,history = run_cv(2, DATA_LIST, None, DATA_LIST_TEST)


# Plot the loss and accuracy curves recorded during training
import matplotlib.pyplot as plt
%matplotlib inline

def history_plot(history_fit):
    plt.figure(figsize=(12,6))

    # summarize history for accuracy
    plt.subplot(121)
    plt.plot(history_fit.history["accuracy"])
    plt.plot(history_fit.history["val_accuracy"])
    plt.title("model accuracy")
    plt.ylabel("accuracy")
    plt.xlabel("epoch")
    plt.legend(["train", "valid"], loc="upper left")

    # summarize history for loss
    plt.subplot(122)
    plt.plot(history_fit.history["loss"])
    plt.plot(history_fit.history["val_loss"])
    plt.title("model loss")
    plt.ylabel("loss")
    plt.xlabel("epoch")
    plt.legend(["train", "valid"], loc="upper left")

    plt.show()

history_plot(history)


Prediction

# The predicted class is the index of the largest summed score
test_pred = [np.argmax(x) for x in test_model_pred]
test_df['labels'] = test_pred
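The test scores are accumulated over the folds and the predicted class is the index of the largest total. A tiny sketch with invented probabilities for two folds and three classes (the notebook uses ten classes and real model outputs):

```python
def argmax(values):
    """Index of the largest value (the first one on ties)."""
    return max(range(len(values)), key=values.__getitem__)

# Invented per-fold class probabilities for two test samples
fold_a = [[0.7, 0.2, 0.1], [0.1, 0.5, 0.4]]
fold_b = [[0.6, 0.3, 0.1], [0.2, 0.3, 0.5]]

# Sum the folds element-wise, then pick the best class per sample
summed = [[a + b for a, b in zip(row_a, row_b)] for row_a, row_b in zip(fold_a, fold_b)]
preds = [argmax(row) for row in summed]
print(preds)  # [0, 2]
```

For the second sample the two folds disagree (class 1 versus class 2), and the summed scores settle it in favor of class 2. Dividing by the number of folds would not change the result, since argmax is unaffected by a positive scale factor.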

test_df['labels']


test_df


from sklearn.metrics import classification_report

print(classification_report(test_df["label"], test_df["labels"]))

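`classification_report` lists precision and recall for each class, among other figures. To make those two numbers concrete, here is a hand-rolled sketch on invented labels. The function `per_class_precision_recall` is illustrative and is not scikit-learn's implementation.

```python
def per_class_precision_recall(y_true, y_pred):
    """Return {label: (precision, recall)} computed from two equal-length label lists."""
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        predicted = sum(1 for p in y_pred if p == label)  # samples predicted as this label
        actual = sum(1 for t in y_true if t == label)     # samples that truly have this label
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        report[label] = (precision, recall)
    return report

# Invented labels for illustration
y_true = [0, 0, 1, 1]
y_pred = [0, 1, 1, 1]
report = per_class_precision_recall(y_true, y_pred)
for label, (precision, recall) in report.items():
    print(f"class {label}: precision={precision:.2f} recall={recall:.2f}")
```

Here class 0 has perfect precision but only half its samples are found, while class 1 is fully recalled at the cost of one false positive.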

Summary

That's all for this time! See you next time!
