
UNet++ Study Notes (Backbone Network + Code)

Author: 从前慢现在也慢 | 2024-03-31 22:21:16


Paper

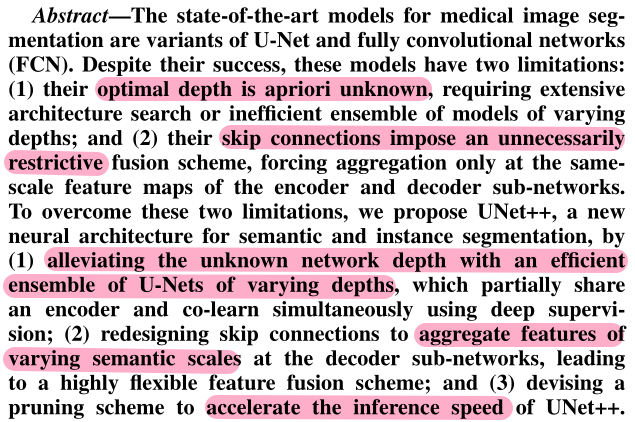

1 Abstract

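The paper identifies two main shortcomings of UNet:

① The optimal network depth is not known in advance, so finding it requires extensive experiments or an ensemble of networks of different depths, which is inefficient;

② The skip connections impose an unnecessary restriction: features are fused only at the same scale.

To address these, UNet++ makes the following improvements:

① It efficiently ensembles UNets of different depths (which all share one encoder) and trains them jointly under supervision, so that the best depth can be searched for;

② It redesigns the skip connections so that the decoder sub-networks can aggregate features of different scales, which is more flexible;

③ It uses pruning to speed up UNet++ inference.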

2 Introduction

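The conventional encoder-decoder architecture with skip connections works well for semantic segmentation because it combines the shallow, fine-grained features of the encoder sub-network with the deep, coarse-grained features of the decoder sub-network.

The paper makes five contributions:

① UNet++ embeds UNets of different depths, so the architecture is no longer tied to one fixed depth;

② The skip connections are more flexible and no longer fuse only same-scale features;

③ A pruning scheme is designed to speed up inference;

④ Training the embedded UNets of different depths simultaneously leads to collaborative learning among them, which improves performance;

⑤ The architecture is shown to be extensible.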

3 Backbone

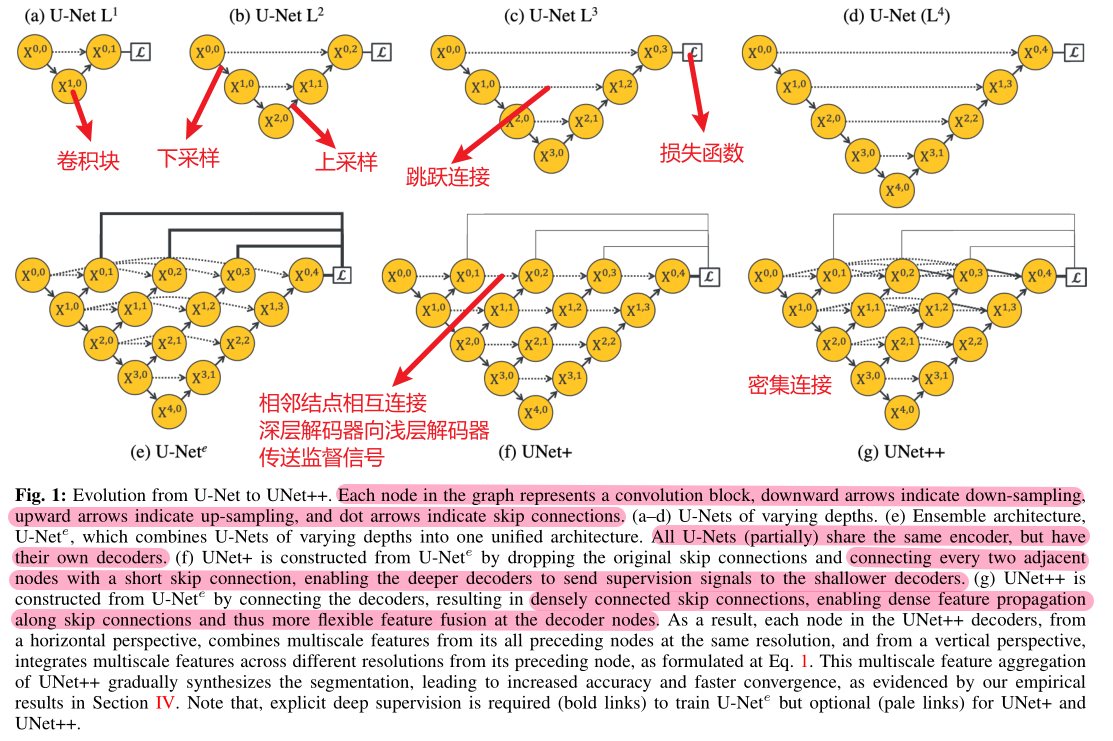

3.1 Motivation

Experiments show that a deeper UNet is not necessarily better, so the authors ran several groups of ablation experiments.

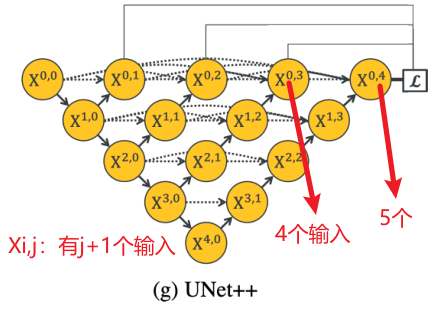

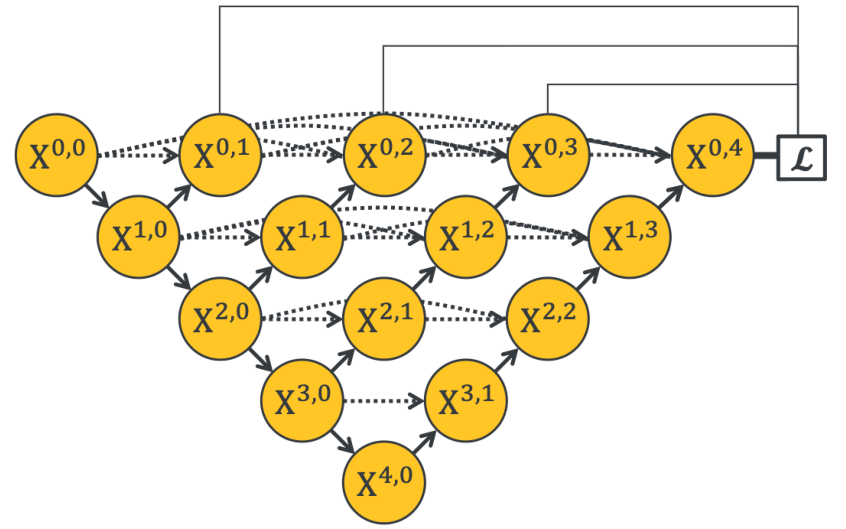

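In UNet^e, a loss must be attached to each of X^{0,1}, X^{0,2}, X^{0,3} and X^{0,4} so that every embedded UNet receives gradients. Going from UNet+ to UNet++, the short connections become long connections, which makes more effective use of the features produced at each level.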
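One way to write the requirement above as a formula, assuming each supervised node X^{0,j} gets its own prediction \hat{Y}^{0,j} and the per-head losses are summed with equal weight (the equal weighting is an assumption made here, not something these notes specify):

$$
\mathcal{L}_{\text{total}} = \sum_{j=1}^{4} \mathcal{L}\left(Y, \hat{Y}^{0,j}\right)
$$

Each term depends only on the nodes that feed X^{0,j}, so the j-th term sends gradients through the embedded UNet of depth j, while the shared encoder receives gradients from all four terms.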

3.2 Structure

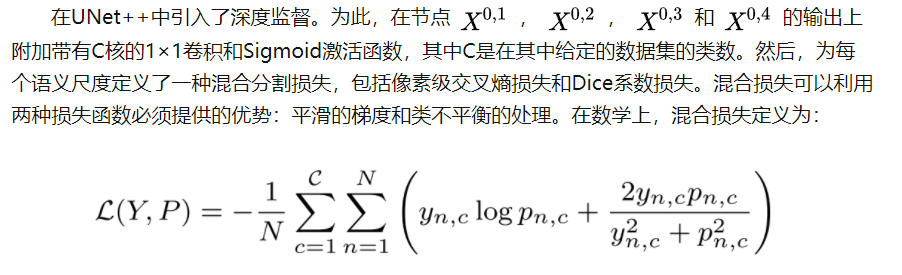
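Assuming the figure above shows the standard UNet++ topology, the computation at each node can be summarised as follows. Here x^{i,j} is the output of node X^{i,j}, \mathcal{H} is a convolution block, \mathcal{D} is downsampling, \mathcal{U} is upsampling, and square brackets denote channel-wise concatenation:

$$
x^{i,j} =
\begin{cases}
\mathcal{H}\left(\mathcal{D}\left(x^{i-1,j}\right)\right), & j = 0 \\
\mathcal{H}\left(\left[\left[x^{i,k}\right]_{k=0}^{j-1},\ \mathcal{U}\left(x^{i+1,j-1}\right)\right]\right), & j > 0
\end{cases}
$$

Nodes with j = 0 form the encoder. Every other node receives all earlier outputs at its own level plus one upsampled output from the level below, which is the same pattern the `torch.cat` calls follow in the code section.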

3.3 Deep supervision

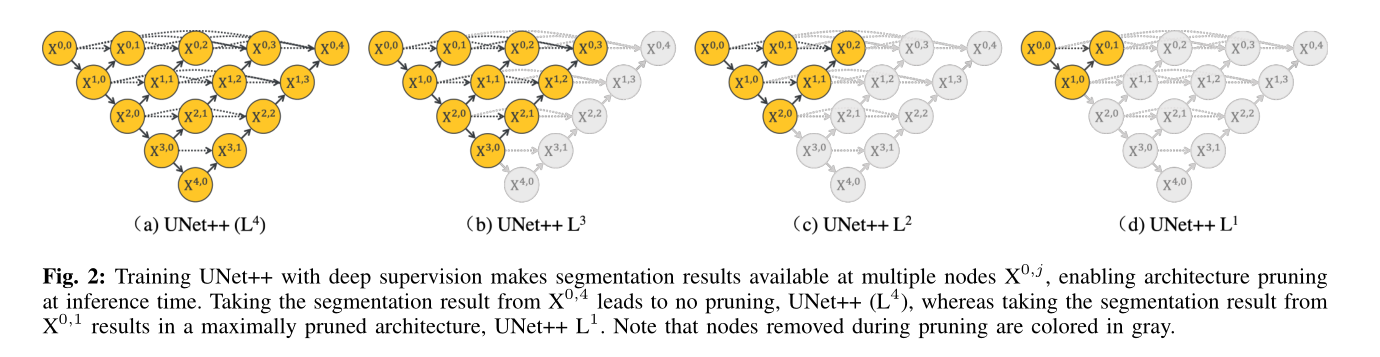

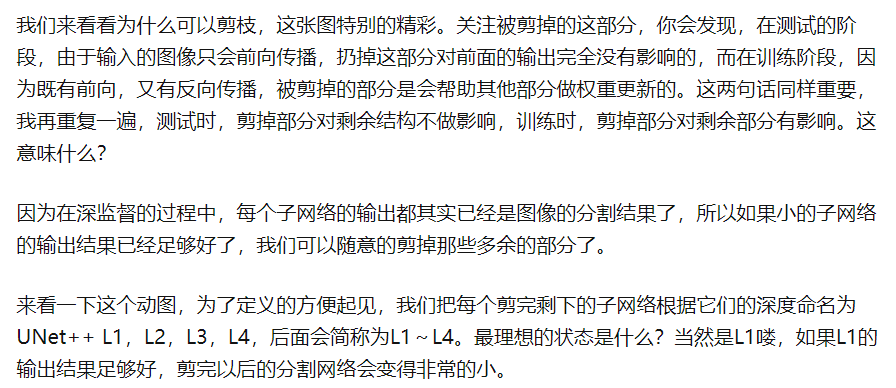

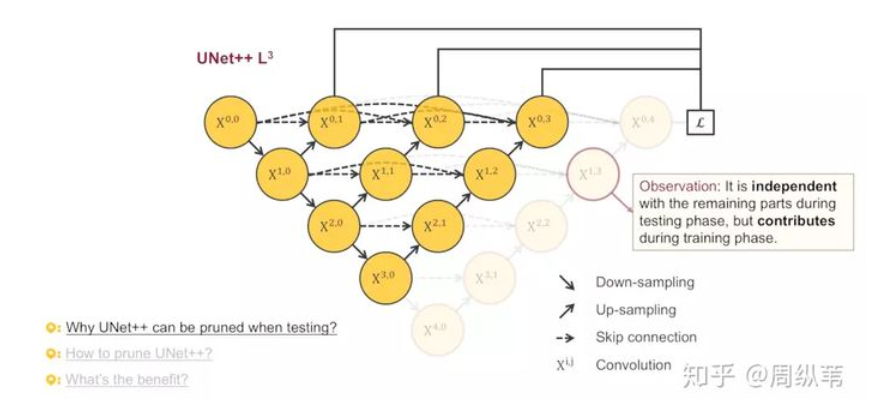

3.4 Model pruning

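- Ensemble mode: the segmentation results of all segmentation branches are collected and then averaged;

- Pruned mode: the segmentation output is taken from only one segmentation branch, and the choice of branch determines the extent of model pruning and the speed gain, as in the figure above.

The code below follows: 研习U-Net - 知乎 (zhihu.com)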
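The two modes can be illustrated with a small framework-free sketch. It assumes the node naming `x{i}_{j}` used in the code section and represents each branch output as a flat list of per-pixel scores; `nodes_needed` and `ensemble_average` are hypothetical helper names, and the score values are made up for illustration.

```python
def nodes_needed(level):
    """Names of the conv blocks that must run to produce X^{0,level}.

    Node X^{i,j} feeds X^{0,level} only when i + j <= level, so pruning at a
    shallower level skips every block on the deeper diagonals.
    """
    return [f"x{i}_{j}" for j in range(level + 1) for i in range(level + 1 - j)]


def ensemble_average(branch_scores):
    """Ensemble mode: average per-pixel scores over all segmentation branches."""
    count = len(branch_scores)
    return [sum(pixel) / count for pixel in zip(*branch_scores)]


# Pruned mode: the shallower the chosen branch, the fewer blocks are evaluated.
for level in range(1, 5):
    print(f"L{level}: {len(nodes_needed(level))} conv blocks")

# Four branches, each a flat list of per-pixel foreground scores (made-up values).
scores = [[0.2, 0.8], [0.4, 0.6], [0.4, 1.0], [0.6, 0.8]]
print(ensemble_average(scores))
```

The block counts show where the speed gain comes from: keeping only the first branch needs 3 of the 15 blocks, whereas ensemble mode always needs all of them.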

Code

```python
import torch
import torch.nn as nn


# Basic block (conv-BN-ReLU twice); stacked to form every node of the network
class VGGBlock(nn.Module):
    def __init__(self, in_channels, middle_channels, out_channels):
        super().__init__()
        self.relu = nn.ReLU(inplace=True)
        self.conv1 = nn.Conv2d(in_channels, middle_channels, 3, padding=1)
        self.bn1 = nn.BatchNorm2d(middle_channels)
        self.conv2 = nn.Conv2d(middle_channels, out_channels, 3, padding=1)
        self.bn2 = nn.BatchNorm2d(out_channels)

    def forward(self, x):
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        return out


# UNet++ backbone
class NestedUNet(nn.Module):
    def __init__(self, num_classes, input_channels=3, deep_supervision=False, **kwargs):
        super().__init__()

        nb_filter = [32, 64, 128, 256, 512]

        self.deep_supervision = deep_supervision

        self.pool = nn.MaxPool2d(2, 2)
        self.up = nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True)

        # First diagonal (top-left to bottom-right): the shared encoder
        self.conv0_0 = VGGBlock(input_channels, nb_filter[0], nb_filter[0])
        self.conv1_0 = VGGBlock(nb_filter[0], nb_filter[1], nb_filter[1])
        self.conv2_0 = VGGBlock(nb_filter[1], nb_filter[2], nb_filter[2])
        self.conv3_0 = VGGBlock(nb_filter[2], nb_filter[3], nb_filter[3])
        self.conv4_0 = VGGBlock(nb_filter[3], nb_filter[4], nb_filter[4])

        # Second diagonal
        self.conv0_1 = VGGBlock(nb_filter[0] * 1 + nb_filter[1], nb_filter[0], nb_filter[0])
        self.conv1_1 = VGGBlock(nb_filter[1] * 1 + nb_filter[2], nb_filter[1], nb_filter[1])
        self.conv2_1 = VGGBlock(nb_filter[2] * 1 + nb_filter[3], nb_filter[2], nb_filter[2])
        self.conv3_1 = VGGBlock(nb_filter[3] * 1 + nb_filter[4], nb_filter[3], nb_filter[3])

        # Third diagonal
        self.conv0_2 = VGGBlock(nb_filter[0] * 2 + nb_filter[1], nb_filter[0], nb_filter[0])
        self.conv1_2 = VGGBlock(nb_filter[1] * 2 + nb_filter[2], nb_filter[1], nb_filter[1])
        self.conv2_2 = VGGBlock(nb_filter[2] * 2 + nb_filter[3], nb_filter[2], nb_filter[2])

        # Fourth diagonal
        self.conv0_3 = VGGBlock(nb_filter[0] * 3 + nb_filter[1], nb_filter[0], nb_filter[0])
        self.conv1_3 = VGGBlock(nb_filter[1] * 3 + nb_filter[2], nb_filter[1], nb_filter[1])

        # Fifth diagonal
        self.conv0_4 = VGGBlock(nb_filter[0] * 4 + nb_filter[1], nb_filter[0], nb_filter[0])

        # 1x1 convolution output heads
        if self.deep_supervision:
            self.final1 = nn.Conv2d(nb_filter[0], num_classes, kernel_size=1)
            self.final2 = nn.Conv2d(nb_filter[0], num_classes, kernel_size=1)
            self.final3 = nn.Conv2d(nb_filter[0], num_classes, kernel_size=1)
            self.final4 = nn.Conv2d(nb_filter[0], num_classes, kernel_size=1)
        else:
            self.final = nn.Conv2d(nb_filter[0], num_classes, kernel_size=1)

    def forward(self, x):
        x0_0 = self.conv0_0(x)
        x1_0 = self.conv1_0(self.pool(x0_0))
        x0_1 = self.conv0_1(torch.cat([x0_0, self.up(x1_0)], 1))

        x2_0 = self.conv2_0(self.pool(x1_0))
        x1_1 = self.conv1_1(torch.cat([x1_0, self.up(x2_0)], 1))
        x0_2 = self.conv0_2(torch.cat([x0_0, x0_1, self.up(x1_1)], 1))

        x3_0 = self.conv3_0(self.pool(x2_0))
        x2_1 = self.conv2_1(torch.cat([x2_0, self.up(x3_0)], 1))
        x1_2 = self.conv1_2(torch.cat([x1_0, x1_1, self.up(x2_1)], 1))
        x0_3 = self.conv0_3(torch.cat([x0_0, x0_1, x0_2, self.up(x1_2)], 1))

        x4_0 = self.conv4_0(self.pool(x3_0))
        x3_1 = self.conv3_1(torch.cat([x3_0, self.up(x4_0)], 1))
        x2_2 = self.conv2_2(torch.cat([x2_0, x2_1, self.up(x3_1)], 1))
        x1_3 = self.conv1_3(torch.cat([x1_0, x1_1, x1_2, self.up(x2_2)], 1))
        x0_4 = self.conv0_4(torch.cat([x0_0, x0_1, x0_2, x0_3, self.up(x1_3)], 1))

        if self.deep_supervision:
            output1 = self.final1(x0_1)
            output2 = self.final2(x0_2)
            output3 = self.final3(x0_3)
            output4 = self.final4(x0_4)
            # Deep supervision: four outputs, each trained with its own loss term
            return [output1, output2, output3, output4]
        else:
            output = self.final(x0_4)
            return output
```
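As a quick check on the constructor arguments in the listing above, the input width of every nested block can be derived from its position in the grid. The sketch below reuses the `nb_filter` list from the listing; `in_channels` is a hypothetical helper name introduced only for illustration.

```python
nb_filter = [32, 64, 128, 256, 512]


def in_channels(i, j):
    """Input channels of block conv{i}_{j} for j >= 1.

    The block concatenates j same-level feature maps (x{i}_0 ... x{i}_{j-1},
    each nb_filter[i] wide) with one upsampled map from level i + 1
    (nb_filter[i + 1] wide; upsampling does not change the channel count).
    """
    return nb_filter[i] * j + nb_filter[i + 1]


# Walk the grid diagonal by diagonal, as the constructor does.
for j in range(1, 5):
    for i in range(5 - j):
        print(f"conv{i}_{j}: in={in_channels(i, j)}, out={nb_filter[i]}")
```

The number of concatenated same-level maps grows with j, which is why the multiplier in the constructor rises from 1 to 4 along the top row.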


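Disclaimer: this article was contributed by a user and does not represent the position of 【wpsshop博客】. Copyright belongs to the original author, and the site assumes no legal liability for the content. If you find infringing content, please contact the site. When reposting, cite the source: https://www.wpsshop.cn/w/从前慢现在也慢/article/detail/346237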
